Problem 1 (Unbiasedness): Let \(Y_1, Y_2, \ldots, Y_n\) be i.i.d. from a distribution with mean \(\mu\) and variance \(\sigma^2\). Show that \(S^2 = \frac{1}{n-1}\sum_{i=1}^{n}(Y_i - \bar{Y})^2\) is an unbiased estimator of \(\sigma^2\). (Wackerly Ex. 8.1)
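
A quick way to sanity-check the claim in Problem 1 before proving it is to compute \(E(S^2)\) exactly for a small discrete distribution by enumerating every possible sample. The sketch below is only an illustration: the three-point distribution, the sample size \(n = 3\), and the helper names are assumptions chosen for the example, not part of the exercise.

```python
from fractions import Fraction
from itertools import product

# Hypothetical three-point distribution, chosen only for illustration.
values = [Fraction(0), Fraction(1), Fraction(3)]
probs = [Fraction(1, 2), Fraction(1, 3), Fraction(1, 6)]


def population_variance(values, probs):
    """Exact sigma^2 = E[(Y - mu)^2] for a discrete distribution."""
    mu = sum(v * p for v, p in zip(values, probs))
    return sum(p * (v - mu) ** 2 for v, p in zip(values, probs))


def expected_scaled_sum_of_squares(values, probs, n, divisor):
    """Exact E[ sum_i (Y_i - Ybar)^2 / divisor ] over all i.i.d. samples of size n."""
    total = Fraction(0)
    for sample in product(range(len(values)), repeat=n):
        # Probability of this particular ordered sample.
        weight = Fraction(1)
        for idx in sample:
            weight *= probs[idx]
        ys = [values[idx] for idx in sample]
        ybar = sum(ys) / n
        ss = sum((y - ybar) ** 2 for y in ys)
        total += weight * ss / divisor
    return total


n = 3
sigma2 = population_variance(values, probs)
e_s2 = expected_scaled_sum_of_squares(values, probs, n, divisor=n - 1)
e_biased = expected_scaled_sum_of_squares(values, probs, n, divisor=n)

print("sigma^2            =", sigma2)
print("E[S^2], divisor n-1 =", e_s2)
print("E[.],   divisor n   =", e_biased)
```

Because the arithmetic uses exact fractions, the first two printed values agree exactly, while dividing by \(n\) instead of \(n-1\) gives \(\frac{n-1}{n}\sigma^2\). This checks one distribution only and does not replace the general proof.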

Problem 2 (Bias & MSE): Suppose \(E(\hat{\theta}) = 2\theta + 1\). Find \(B(\hat{\theta})\) and construct an unbiased estimator \(\hat{\theta}^*\) from \(\hat{\theta}\). (Wackerly Ex. 8.3)
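
As a sketch of the reasoning for Problem 2, using the usual definition \(B(\hat{\theta}) = E(\hat{\theta}) - \theta\) and linearity of expectation (the rescaled estimator below is one natural choice, not necessarily the only one):

\[
B(\hat{\theta}) = (2\theta + 1) - \theta = \theta + 1,
\qquad
\hat{\theta}^* = \frac{\hat{\theta} - 1}{2}
\;\Rightarrow\;
E(\hat{\theta}^*) = \frac{(2\theta + 1) - 1}{2} = \theta .
\]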

Problem 3 (Comparing Estimators): Let \(Y \sim \text{Binomial}(n, p)\), and consider the estimators \(\hat{p}_1 = Y/n\) and \(\hat{p}_2 = (Y+1)/(n+2)\) of \(p\). Derive \(B(\hat{p}_2)\), \(\text{MSE}(\hat{p}_1)\), and \(\text{MSE}(\hat{p}_2)\). For which values of \(p\) does \(\hat{p}_2\) have the smaller MSE? (Wackerly Ex. 8.17)
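
For Problem 3, a derived MSE formula can be checked against the exact MSE obtained by summing over the binomial probability mass function. The sketch below assumes \(Y \sim \text{Binomial}(n, p)\); the value \(n = 10\), the grid of \(p\) values, and all function names are hypothetical choices for illustration. The closed forms coded here are the candidate answers \(\text{MSE}(\hat{p}_1) = p(1-p)/n\) and \(\text{MSE}(\hat{p}_2) = \frac{np(1-p) + (1-2p)^2}{(n+2)^2}\), which the code compares with the brute-force values.

```python
from math import comb, isclose


def binom_pmf(y, n, p):
    """P(Y = y) for Y ~ Binomial(n, p)."""
    return comb(n, y) * p**y * (1 - p) ** (n - y)


def exact_mse(estimator, n, p):
    """E[(estimator(Y, n) - p)^2], summed over every possible value of Y."""
    return sum(binom_pmf(y, n, p) * (estimator(y, n) - p) ** 2 for y in range(n + 1))


def p1(y, n):
    return y / n


def p2(y, n):
    return (y + 1) / (n + 2)


def mse_p1_closed(n, p):
    # p1 is unbiased, so its MSE equals its variance.
    return p * (1 - p) / n


def mse_p2_closed(n, p):
    # variance n p (1 - p) / (n + 2)^2 plus squared bias ((1 - 2p) / (n + 2))^2
    return (n * p * (1 - p) + (1 - 2 * p) ** 2) / (n + 2) ** 2


n = 10  # hypothetical sample size
for p in (0.05, 0.25, 0.50, 0.75, 0.95):
    m1, m2 = exact_mse(p1, n, p), exact_mse(p2, n, p)
    better = "p2" if m2 < m1 else "p1"
    print(f"p={p:.2f}  MSE(p1)={m1:.5f}  MSE(p2)={m2:.5f}  smaller: {better}")
```

Running this for a few values of \(n\) suggests where the crossover points lie; the exact interval of \(p\) still has to be found by solving \(\text{MSE}(\hat{p}_2) < \text{MSE}(\hat{p}_1)\) algebraically.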

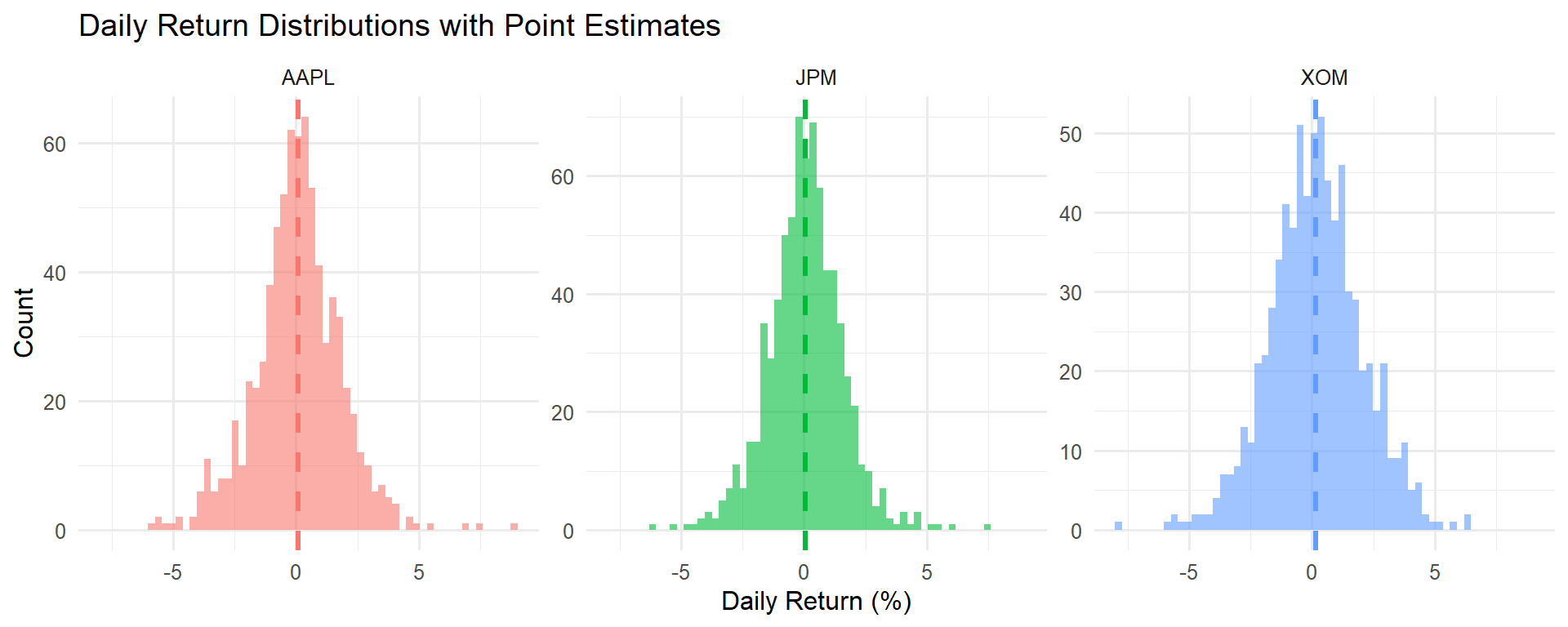

Problem 4 (Financial Application): An analyst observes \(n = 252\) daily returns of a hedge fund with \(\bar{y} = 0.06\%\) and \(s = 1.2\%\). (a) Give a point estimate of the true mean daily return. (b) Place a 2-SE bound on the error. (c) Annualize your estimate (×252) and interpret.
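
The arithmetic in Problem 4 can be laid out as a short script. This is a minimal sketch that assumes the standard error of the mean is \(s/\sqrt{n}\) and that annualizing means simple scaling by 252 with no compounding, as the "×252" hint suggests; the variable names are illustrative.

```python
from math import sqrt

n = 252          # number of daily returns
ybar_pct = 0.06  # sample mean daily return, in percent
s_pct = 1.2      # sample standard deviation of daily returns, in percent

# (a) The point estimate of the true mean daily return is the sample mean.
point_estimate_pct = ybar_pct

# (b) Standard error of the mean and the 2-SE bound on the error of estimation.
se_pct = s_pct / sqrt(n)
bound_pct = 2 * se_pct

# (c) Simple (non-compounded) annualization: scale the estimate and its bound by 252.
annual_estimate_pct = ybar_pct * n
annual_bound_pct = bound_pct * n

print(f"(a) point estimate: {point_estimate_pct:.2f}% per day")
print(f"(b) SE = {se_pct:.4f}%, 2-SE bound = {bound_pct:.4f}%")
print(f"(c) annualized: {annual_estimate_pct:.2f}% +/- {annual_bound_pct:.1f}%")
```

Note that the 2-SE bound (about 0.15% per day) is larger than the point estimate itself, so one year of daily data cannot distinguish the fund's mean return from zero; that is the main point to bring out in the interpretation for part (c).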